Understanding the Tension Between Stability and Agility

Legacy systems are not defined by age, but by their resistance to change. A system becomes legacy the moment its maintenance cost exceeds its business value or when its architecture prevents the adoption of modern CI/CD practices. In 2026, many organizations still rely on COBOL-based banking cores or Java 8 monoliths that handle trillions in transactions. These systems are "load-bearing walls"; you cannot simply knock them down without the entire structure collapsing.

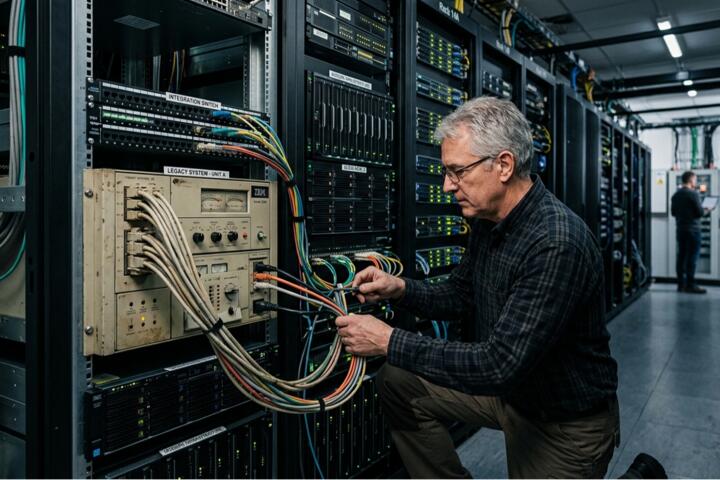

The reality of modern development involves a hybrid state. You are likely running Dockerized microservices alongside a 15-year-old SQL Server instance that refuses to scale horizontally. According to recent industry benchmarks, mid-to-large enterprises spend up to 75% of their IT budget simply "keeping the lights on" for these older frameworks. The goal isn't necessarily to delete the old code, but to build an abstraction layer that allows new features to be deployed at the speed of a startup.

Practical examples include retail giants using mainframes for inventory logic while exposing that data through GraphQL wrappers for mobile apps. One major logistics firm reduced its deployment cycle from six months to two weeks by implementing a "strangler" pattern around its legacy routing engine, which suggests that you don't need a total rewrite to reach modern delivery speeds.

Common Pain Points in Sustaining Older Codebases

The most frequent mistake leadership makes is treating legacy modernization as a "big bang" project. Attempting to rewrite a decade of business logic in one sprint is a recipe for catastrophic downtime and budget overruns. When teams try to flip the switch all at once, they often find that the "documented" logic doesn't match the actual behavior of the production code.

Another critical failure point is the "Technical Debt Trap." When developers work on legacy systems, they often apply quick patches (band-aids) instead of addressing the underlying architectural rot. The result is fragility: a change in the billing module unexpectedly breaks the user authentication flow. Because these systems usually lack automated tests, manual QA becomes a massive bottleneck.

The consequences are measurable. Research indicates that developers working on poorly managed legacy systems are 40% less productive than those in modernized environments. Furthermore, security vulnerabilities often go unpatched because the dependencies are so old that modern scanners (like Snyk or GitHub Advanced Security) may not support their package formats or build tooling, leaving the organization exposed to exploits that were fixed upstream years ago.

Solutions and Actionable Recommendations

Implement the Strangler Fig Pattern

Instead of replacing the old system, you build new functionality around it. When a specific feature needs an update, you rewrite only that piece as a modern service.

- Why it works: It minimizes risk. If the new service fails, the old system is still there as a fallback.
- In Practice: Use an API Gateway (like Kong or Apigee) to intercept calls. Route 5% of traffic to the new microservice and 95% to the legacy core, increasing the ratio as confidence grows.
- Tools: NGINX, Amazon API Gateway, Istio Service Mesh.
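The 5%/95% split described above can be sketched in a few lines of Python. This is only an illustration of the routing decision, not the configuration of any particular gateway: the function names, the backend labels `new-service` and `legacy-core`, and the choice to hash a caller key are all assumptions made for the example.

```python
import hashlib


def routes_to_new_service(request_key: str, new_service_percent: int) -> bool:
    """Deterministically decide whether a caller belongs to the new-service cohort.

    Hashing the key (instead of drawing a random number per request) keeps a
    given caller on the same backend across requests.
    """
    if not 0 <= new_service_percent <= 100:
        raise ValueError("new_service_percent must be between 0 and 100")
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < new_service_percent


def pick_backend(request_key: str, new_service_percent: int) -> str:
    # Backend names are hypothetical labels, not real hostnames.
    if routes_to_new_service(request_key, new_service_percent):
        return "new-service"
    return "legacy-core"


if __name__ == "__main__":
    keys = [f"customer-{i}" for i in range(10_000)]
    share = sum(routes_to_new_service(k, 5) for k in keys) / len(keys)
    print(f"share routed to new service: {share:.1%}")
```

Because a caller is routed to the new service whenever its bucket is below the threshold, raising the percentage only adds callers to the new cohort; nobody who was already on the new service is moved back to the legacy core.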

Introduce Observability Without Code Changes

You cannot fix what you cannot measure. Legacy systems are often "black boxes."

- What to do: Deploy sidecar proxies or agent-based monitoring that hooks into the OS or network level rather than the application code.
- Results: One financial institution used New Relic agents to identify that 60% of their legacy latency was caused by a single unindexed database query, not the code itself.
- Tools: Datadog, Prometheus, OpenTelemetry.
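Even before a full monitoring stack is in place, the idea of measuring from outside the application can be tried on whatever a proxy in front of the legacy system already logs. The sketch below assumes a hypothetical access-log format of `<method> <path> <status> <duration_ms>` per line; real proxies use different formats, so the parsing would need to be adapted.

```python
from collections import defaultdict
from statistics import median


def latency_by_endpoint(log_lines):
    """Group request durations (in ms) by path from proxy access-log lines.

    Assumed line format (hypothetical): "<method> <path> <status> <duration_ms>"
    """
    durations = defaultdict(list)
    for line in log_lines:
        parts = line.split()
        if len(parts) != 4:
            continue  # skip malformed lines rather than fail the whole report
        _method, path, _status, duration_ms = parts
        try:
            value = float(duration_ms)
        except ValueError:
            continue  # skip lines whose duration is not numeric
        durations[path].append(value)
    return {
        path: {"count": len(values), "median_ms": median(values), "max_ms": max(values)}
        for path, values in durations.items()
    }


if __name__ == "__main__":
    sample = [
        "GET /claims 200 120",
        "GET /claims 200 80",
        "POST /billing 500 900",
    ]
    for path, stats in latency_by_endpoint(sample).items():
        print(path, stats)
```

A per-endpoint summary like this is often enough to show which part of the "black box" deserves a closer look with a proper agent or tracing tool.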

Automated Regression Testing via "Golden Master"

Since legacy code often lacks unit tests, use characterization testing.

- What to do: Capture the output of the legacy system for a given input (the "Golden Master"). After making a change, run the same input and ensure the output remains identical.
- Why it works: It provides a safety net for refactoring without requiring a deep understanding of the original spaghetti code.
- Tools: ApprovalTests, VCR (for Ruby), or custom snapshot scripts.
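A custom snapshot script of the kind mentioned above might look roughly like this. It is a minimal sketch: `legacy_quote` is a made-up stand-in for a call into the legacy system, and the JSON file layout is an assumption rather than the format of any listed tool.

```python
import json
from pathlib import Path


def legacy_quote(age: int, claims: int) -> float:
    # Hypothetical stand-in for a call into the legacy system.
    base = 200.0
    if age < 25:
        base *= 1.5
    return round(base + 75.0 * claims, 2)


def capture(func, inputs):
    """Run func over every input and return a JSON-serializable record."""
    return [{"input": list(args), "output": func(*args)} for args in inputs]


def check_against_master(func, inputs, master_path: Path):
    """Record the master on the first run; afterwards return any mismatches."""
    current = capture(func, inputs)
    if not master_path.exists():
        master_path.write_text(json.dumps(current, indent=2))
        return []
    master = json.loads(master_path.read_text())
    if len(master) != len(current):
        raise ValueError("input set changed; re-record the golden master")
    return [
        {"input": new["input"], "expected": old["output"], "actual": new["output"]}
        for old, new in zip(master, current)
        if old != new
    ]


if __name__ == "__main__":
    cases = [(30, 0), (22, 1), (40, 3)]
    diffs = check_against_master(legacy_quote, cases, Path("golden_master.json"))
    print("mismatches:", diffs)
```

The first run records the current behavior, bugs included. Every later run compares against that recording, so an empty mismatch list means the refactoring has not changed observable output for the captured inputs.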

Containerization as a First Step

Before rewriting, wrap the legacy app in a container.

- What to do: Move the application from a manual VM setup to a Docker image. This standardizes the environment across dev, staging, and production.
- Results: This eliminates the "it works on my machine" syndrome and allows the legacy app to participate in modern CI/CD pipelines via Jenkins or GitLab CI.

Real-World Mini-Case Examples

Case 1: Global Insurance Provider

The Problem: An insurance provider used a 20-year-old Delphi application for claims processing. New regulations required mobile integration, but the app had no API.

The Solution: The team used RPA (Robotic Process Automation) via UiPath to bridge the gap initially, while simultaneously building a RESTful wrapper using Node.js that communicated directly with the underlying MS SQL database.

Result: They launched a mobile claims app in 4 months. API response times improved by 300% after migrating the database to Amazon RDS.

Case 2: E-commerce Backend Migration

The Problem: A high-traffic retailer had a PHP 5.6 monolith that crashed during Black Friday.

The Solution: They migrated the "Search" and "Checkout" functions to Go-based microservices using the Strangler Fig pattern. They kept the "User Profile" and "Order History" on the legacy system.

Result: During the next peak event, the site handled 5x the traffic with zero downtime, as the Go services scaled elastically on Kubernetes while the PHP monolith remained stable under a reduced load.

Comparison of Modernization Strategies

| Strategy | Risk Level | Cost | Speed of Value | Best For |
| --- | --- | --- | --- | --- |
| Encapsulation (API Wrapper) | Low | Low | Very Fast | Adding mobile/web frontends to old logic |
| Replatforming (Lift & Shift to Cloud) | Medium | Medium | Medium | Reducing data center costs |
| Refactoring (Strangler Fig) | Medium | High | Incremental | Long-term evolution of core logic |
| Rebuilding (Total Rewrite) | Extremely High | Very High | Very Slow | Systems that are technically unsalvageable |

Common Pitfalls and Mitigation

A frequent error is "Resume-Driven Development." This happens when engineers choose a complex new technology (like a highly experimental Rust framework) to replace a stable legacy system simply because they want to learn it. This adds unnecessary complexity. Always choose boring, stable technologies (Java/Spring Boot, Python/FastAPI) for the replacement to ensure long-term maintainability.

Another mistake is ignoring the database schema. Code is easy to change; data is hard. If you try to change the application logic but keep a 400-table relational database with no foreign keys, the new code will be just as fragile as the old code. Start by cleaning up the data layer or implementing a Data Abstraction Layer (DAL) to decouple the code from the messy schema.
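To make the decoupling idea concrete, here is a small sketch of such a layer using Python's built-in `sqlite3` module. The table `CUST_TBL_01`, its columns, and the `'A'`/`'I'` status flags are invented for illustration; the point is only that a single class knows the legacy names while the rest of the code works with a clean `Customer` type.

```python
import sqlite3
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Customer:
    customer_id: int
    name: str
    is_active: bool


class CustomerRepository:
    """Only this class knows the legacy table and column names."""

    def __init__(self, conn: sqlite3.Connection):
        self._conn = conn

    def get(self, customer_id: int) -> Optional[Customer]:
        row = self._conn.execute(
            "SELECT C_ID, C_NM, C_STS FROM CUST_TBL_01 WHERE C_ID = ?",
            (customer_id,),
        ).fetchone()
        if row is None:
            return None
        # Translate legacy encodings (padded names, 'A'/'I' status flags).
        return Customer(customer_id=row[0], name=row[1].strip(), is_active=(row[2] == "A"))


def build_demo_db() -> sqlite3.Connection:
    # In-memory database with a hypothetical legacy-style table for the demo.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE CUST_TBL_01 (C_ID INTEGER, C_NM TEXT, C_STS TEXT)")
    conn.executemany(
        "INSERT INTO CUST_TBL_01 VALUES (?, ?, ?)",
        [(1, "ACME LTD      ", "A"), (2, "OLD CO", "I")],
    )
    return conn


if __name__ == "__main__":
    repo = CustomerRepository(build_demo_db())
    print(repo.get(1))
```

If the schema is cleaned up later, only the SQL and the translation inside the repository need to change; callers keep using `Customer` objects.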

Finally, don't forget the "People" factor. The engineers who wrote the original system often feel protective of it. Involving them in the modernization process is vital. If you treat the old code as "trash," you lose the institutional knowledge required to understand why certain (seemingly weird) logic was implemented in the first place.

FAQ

How do I justify the cost of legacy modernization to stakeholders?

Focus on "Risk of Inaction." Calculate the cost of a 4-hour outage or the salary cost of developers waiting for manual deployments. Show how modernization reduces the Total Cost of Ownership (TCO) over 24 months.
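The arithmetic behind a "Risk of Inaction" figure is simple enough to script. The sketch below is one possible way to frame it; every number in it is a placeholder chosen for the example, not a benchmark, and the two cost formulas are simplifying assumptions.

```python
def outage_cost(hours: float, revenue_per_hour: float,
                recovery_staff: int, hourly_rate: float) -> float:
    """Lost revenue plus the staff time spent on recovery during the outage."""
    return hours * revenue_per_hour + hours * recovery_staff * hourly_rate


def deployment_wait_cost(developers: int, hours_waiting_per_deploy: float,
                         deploys_per_year: int, hourly_rate: float) -> float:
    """Salary cost of developers idling while a manual deployment runs."""
    return developers * hours_waiting_per_deploy * deploys_per_year * hourly_rate


if __name__ == "__main__":
    # All inputs below are hypothetical placeholders; substitute your own figures.
    outage = outage_cost(hours=4, revenue_per_hour=10_000, recovery_staff=5, hourly_rate=100)
    waiting = deployment_wait_cost(developers=10, hours_waiting_per_deploy=2,
                                   deploys_per_year=24, hourly_rate=80)
    print(f"one 4-hour outage: {outage:,.0f}")
    print(f"annual deployment waiting: {waiting:,.0f}")
```

Putting both figures next to the projected modernization spend over the same 24-month window gives stakeholders a like-for-like comparison.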

Should we move to microservices immediately?

No. Often, a "Modular Monolith" is a better middle ground. It allows you to clean up the code and define clear boundaries without the massive operational overhead of managing a distributed Kubernetes cluster.

What if there is no documentation for the legacy system?

Use discovery tools and network sniffers to map out how the system communicates. Tools like Dynatrace can auto-generate topology maps to show you which services talk to which databases.

Is COBOL still relevant in 2026?

Yes, particularly in banking and financial services. The goal there is usually "Coexistence." You don't replace the COBOL; you use IBM z/OS Connect to expose those mainframe functions as modern REST APIs.

Can AI help with legacy code?

Yes. Large Language Models (LLMs) are excellent at explaining what a 300-line legacy function does or writing unit tests for it. However, never let AI rewrite core logic without strict human oversight and automated verification.

Author’s Insight

In my fifteen years of architectural consulting, I’ve found that the most successful projects aren't the ones that use the flashiest tech. They are the ones that respect the legacy system's "intent." I once saw a team spend $2M trying to replace an old C++ engine with Spark, only to realize the C++ engine was actually faster for their specific math. My advice: don't move things just to move them. Identify the bottleneck, wrap it, and only then replace it when the business case is undeniable.

Conclusion

Managing legacy systems in modern environments requires a shift from "replacement" thinking to "evolutionary" thinking. By employing patterns like API encapsulation, the Strangler Fig approach, and modern observability, you can unlock the value trapped in older codebases without risking the stability of your operations. Start by containerizing your existing apps and establishing a "Golden Master" test suite. This provides the safety net needed to begin incremental refactoring. Your next step should be a technical audit to identify the single most expensive module to maintain and target it for a pilot "Strangler" service.